As the fall semester nears its end, Michigan State University students will be asked to evaluate their instructors anonymously through the university’s Student Perceptions of Learning Surveys. Some of those students will then head to another site, Rate My Professors, to conduct a second, potentially more candid, evaluation.

Since the creation of Rate My Professors in 1999, students at MSU and elsewhere have turned to the site to inform their semesterly course selections and write reviews for incoming students.

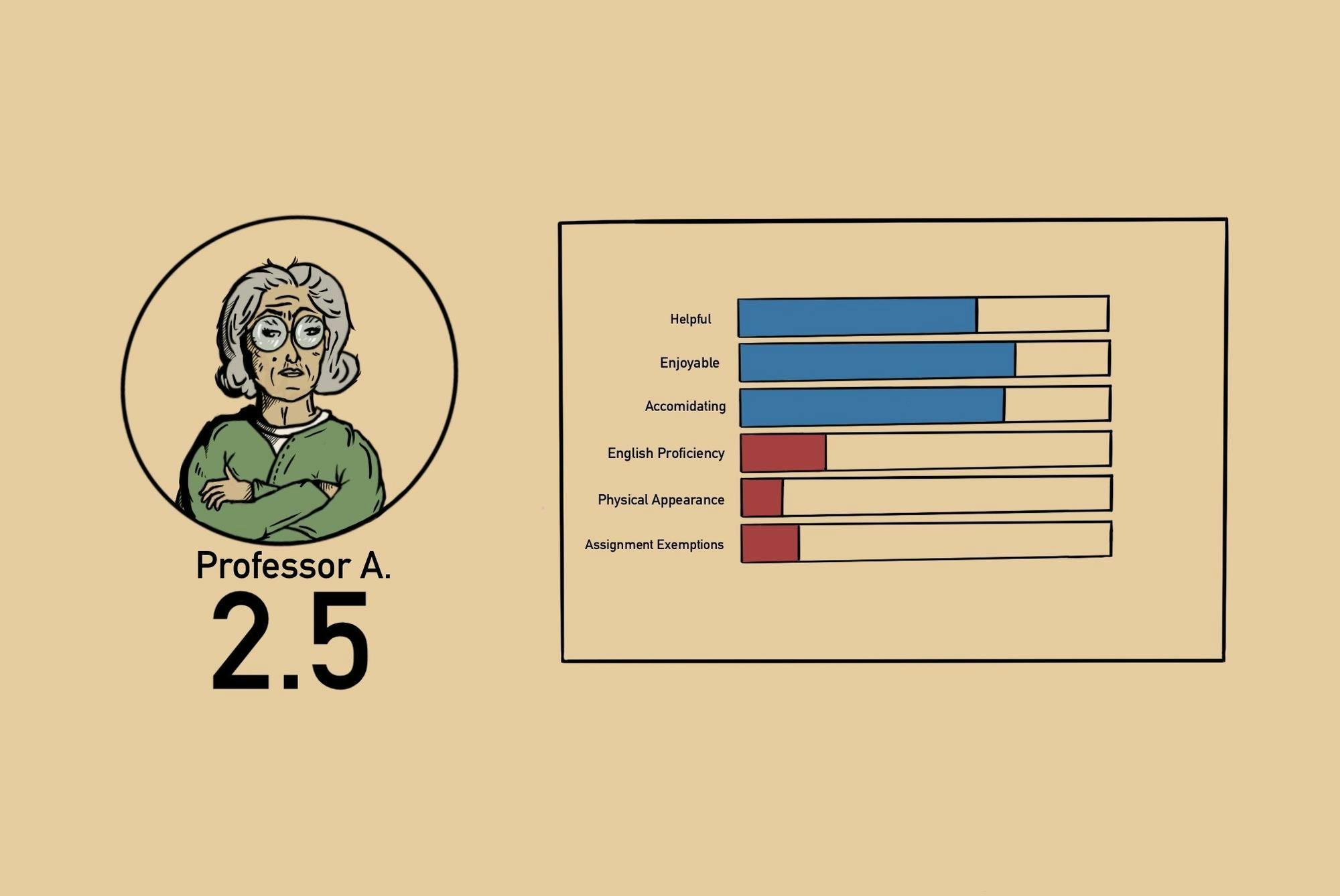

Site users can look at anonymous reviews of an instructor’s performance in any given course, including numerical scores for the course’s difficulty and the quality of instruction, as well as pre-made tags that indicate whether a professor offers extra credit or whether their classes are test-heavy.

But while Rate My Professors has become an essential tool for schedule building (roughly 9.5 million people visited it in March, according to web traffic aggregator SimilarWeb), faculty and administrators warn that its reliance on anonymous reviews, with no way to confirm whether someone is actually enrolled in a class, leaves it ripe for exploitation. Research into the site has also found that female professors consistently receive lower scores than their male colleagues, while other papers have identified a close link between instructors’ lenient academic expectations and high ratings.

Ritam Ganguly, a computer science and engineering professor, said that when he regularly checked Rate My Professors, he noticed himself taking negative reviews to heart and considered being more lenient and giving exemptions. But he ultimately felt doing so would go against his teaching philosophy.

“It kind of acts as a social media. Negative comments feel like they do better than positive comments,” Ganguly said. “It's very easy to open the jar of worms and be persuaded by those negative comments.”

One study found that Rate My Professors produced a significantly higher percentage of negative reviews than university-administered surveys did.

Another study found that students with a higher sense of academic entitlement wrote Rate My Professors evaluations more often. Those students were also more likely to ask for exemptions, to believe they would receive them and to intend to reward or punish professors.

MSU students’ reliance on Rate My Professors has increased because university-sanctioned course evaluations have not been made public. While MSU says it’s working on a plan to make some data from its end-of-semester survey public, some faculty argue that any type of public-facing rating system would shift incentives toward pleasing students rather than delivering effective instruction.

In 2023, MSU introduced the Student Perceptions of Learning Surveys, which were meant to emphasize evaluating personal learning rather than teaching perceptions and to allow academic programs to gather additional feedback through customized questions.

Since the surveys were introduced, students haven’t had access to SPLS results. This is because multiple academic years of responses are needed for reliable aggregated results, said Jessica Livingston, the director of communications within the Office of the Provost.

“That aggregation milestone was reached in 2026,” Livingston said. “The university is now working on a plan that includes data verification, quality assurance, and implementation steps within the new platform so that appropriately aggregated data from selected questions can be made available to students behind the Michigan State University Central Authentication Service (CAS).”

Although some faculty said they value student feedback, the idea of making survey results public makes them uneasy. Caroline Szczepanski, an associate professor of chemical engineering and materials science, said that when she worked at an institution where students could see course evaluation outcomes, it was a significant part of the university’s culture.

“If it starts becoming public-facing, the motivations of getting better scores are a little bit different because people are seeing this to enroll in your class,” Szczepanski said. “Am I then motivated to be lenient?”

Some students may start to expect certain outcomes in classes that are similar to what past students have reported in university surveys — and if expectations are unmet, it creates a cycle of unfounded reviews from scorned students.

Professors want constructive reviews not only to improve their teaching but also because their teaching evaluations often influence career promotion. Opening results up to students could threaten the validity of future data that is used for institutional decision-making, they said.

Making some university survey results public could also encourage consumerist behavior among students, giving them a platform to keep interacting with the educational system in a passive and product-driven manner.

Both Szczepanski and Ganguly recommend that students reach out to senior colleagues in their degree program through advising or other connections for in-depth course feedback and advice.

“Michigan State University encourages students to select courses based on course descriptions, learning outcomes, and academic advising to support informed, educationally grounded decision-making,” Livingston said. “Using external tools can lead to incomplete and inaccurate information about course outcomes.”
