Trigger warning: this page contains references to self-harm and suicide, which some individuals may find distressing.

AI companion chatbot regulations are being reevaluated as they expand

Growing concerns about harm caused by AI companion chatbots have prompted regulatory policies around the world. While the policies are well-intentioned, experts say more research is needed to ensure that some of them do not do more harm than good.

Proposed and implemented approaches to regulating AI companions across the U.S. include requiring certain disclosures, connecting users to crisis intervention services when they show signs of distress, implementing technical safeguards when the chatbot interacts with known minors and, in Michigan, mandating that companies prevent minors from accessing unregulated AI chatbots altogether.

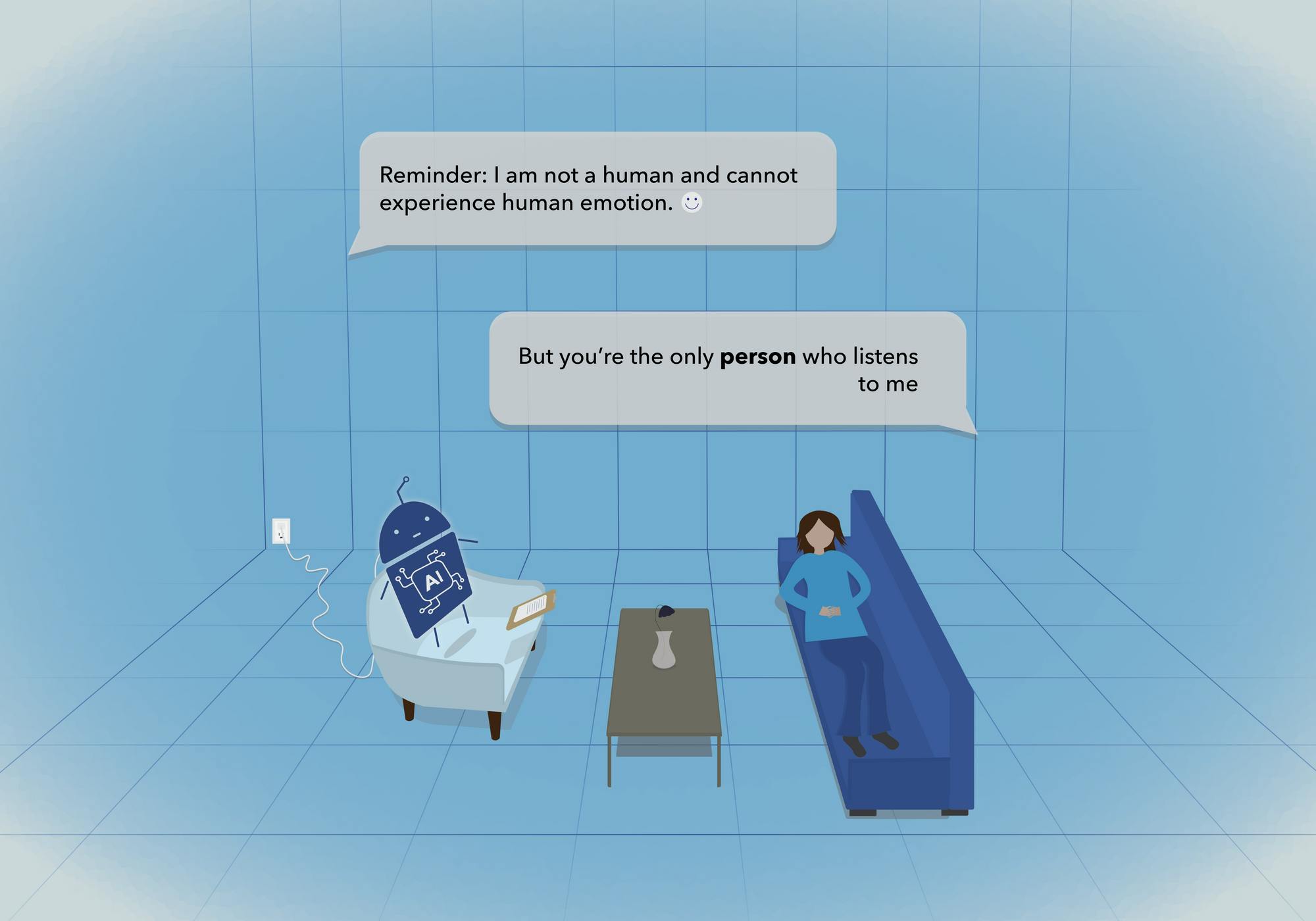

Associate professor of media and information Celeste Campos-Castillo recently raised concerns that there isn't sufficient evidence to support some of the new proposals put forward by policymakers attempting to lessen companion chatbot risks. In an opinion piece published in March, Campos-Castillo and her colleague, Linnea Laestadius, caution against one proposed policy solution in particular: mandating continuous reminders for chatbot users that their AI companions are not human.

Some policies they found concerning were in a New York bill that mandated users be reminded at least every three hours that they aren’t communicating with a human being, and a similar California bill with provisions focusing on minors. Additionally, they expressed concerns about a health advisory on AI issued by the American Psychological Association, which encourages developers to build in regular reminder notifications.

“The assumed logic behind policies like these is that humans will be less likely to develop excessive dependency on a chatbot if they are reminded that it is, in fact, a chatbot and not a human,” the opinion piece reads. “However, there is no indication that reminders of the nonhuman status of a chatbot discourage forming attachments to it. Instead, there is evidence suggesting that reminders could be ineffective.”

Taenyun Kim, a fourth-year PhD student in the media and information program, said that as someone who studies chatbots in his coursework and uses them for both personal and professional purposes, he could see why some users might be negatively affected by the reminder policies specifically.

“When you're having a conversation with a chatbot, we might be immersed in that chat and then it feels like we are having an authentic conversation. But by reminding them that it's inauthentic, I think I would really feel miserable,” Kim said. “Like, it’s kind of reminding me of something that I already know. Even though I know this is not a human, I have no option, but chatting with this chatbot will make me feel more lonely than before.”

The opinion piece highlighted two significant ways users could be affected by the regular reminder mandate: increased chatbot dependence and increased mental and physical health risks.

There’s significant evidence that people with chatbot companions are already aware that the chatbots aren’t human, and that reminders of a chatbot’s non-human nature may cause users to interact with it even more. The knowledge that chatbots are non-human, and the resulting belief that they are non-judgmental, encourages users to keep disclosing sensitive information, which in turn intensifies attachment.

Reminders can also be a trigger for some, potentially leading users to experience amplified feelings of sorrow, distress or loneliness when they’re reminded that they don’t have a real human companion to talk to. The effectiveness of any support they’ve received from the chatbot is diminished, which may lead to amplified mental and physical health risks.

In extreme cases, users might experience increased suicidal thoughts and behaviors. Some users may believe they will be able to interact with their chatbot companion in other realities or the afterlife, while others may have been otherwise influenced by the chatbot to inflict self-harm.

“Whatever risks may appear from interacting with chatbots, it's important to note that they are unevenly distributed across the population,” Campos-Castillo said. “Potential solutions like these reminders may carry some additional risks. Those additional risks consequently will not be evenly distributed because of the kinds of people who are inclined to turn to chatbots to address deficits in social support, and deficits in their ability to access medical care.”

Many states' new regulatory laws have focused first on protecting minors because of concerns about an increased risk of reliance during adolescence. In a report from December of last year, Pew Research Center found that roughly two-thirds of U.S. teens ages 13 to 17 use chatbots and that about three in ten are daily chatbot users, including 16% who use them multiple times a day or constantly. Notably, older teens and Black and Hispanic teens are more likely than their peers to use chatbots.

In Michigan, legislators aiming to protect minors from harmful aspects of the internet have put forth a series of bills called the “Kids Over Clicks” initiative. Among them is the Leading Ethical AI Development (LEAD) Act, which would prohibit making available to minors any companion chatbots that are foreseeably capable of undermining a minor’s safety, well-being or development.

“I think there’s potential risk across the life course,” Campos-Castillo said. “I understand the focus on adolescents, but I do think that we need to think more broadly and comprehensively.”

While minors are generally more impressionable, Campos-Castillo emphasized that there are points in every adult’s life that carry a higher risk of becoming dependent on a chatbot. She said people tend to be most vulnerable during transitional periods when they lack access to the support system they’re used to, such as the transition into adulthood away from childhood friends or the transition into retirement away from work friends.

According to a Pew Research Center survey, young adults ages 18 to 29 stand out from other adult age groups in the U.S. in their ChatGPT use, with about 58% saying they have used it. Additionally, a 2025 Associated Press-NORC poll found that the use of companion chatbots in particular is higher among young adults.

Kim said that with a busy college schedule, he generally prefers the convenience of chatbots over other resources when he wants someone to talk to about his everyday problems and other stressors.

“I can go to Olin Health Center for mental support, but it takes time to make a reservation. It's just cumbersome,” Kim said. “For a chatbot, you can just open your app on your phone and then just text it.”

In her ongoing conversations with young people about AI and companion chatbots, Campos-Castillo has repeatedly heard the same story: having grown up in the 21st century with technology present in all aspects of life, they feel compelled to use those tools in their social interactions because that is what they have always known.

However, when she asks young people how they feel about getting social support from chatbots, they overwhelmingly affirm the value they find in human support.

“It’s important to recognize that young people still want that human and personal connection,” Campos-Castillo said. “It’s just for a whole host of other reasons that they don’t have or feel as if they don’t have those opportunities.”

Ultimately, she says chatbots have the potential to be beneficial in health sectors if they are used as a bridge to human support and are regulated in the right way using an evidence-based approach. To integrate chatbots helpfully, she said, much more research needs to be done on the risk factors associated with chatbot use and on how chatbot attachments develop into observable behavioral concerns.

“We need to accumulate a lot more evidence than we already have. There are rising rates of usage of chatbots among young people,” Campos-Castillo said. “So, we need to catch up.”